As technology advances ever forwards we are seeing increasingly vast and detailed amounts of data being pushed through web browsers, and as a result, both sensitive and personal data is being indexed by search engines.

It is the duty of all businesses in the UK to understand and protect an individual’s personal data under the Data Protection Act 1998 and under European legislation, the General Data Protection Regulation (GDPR). In the event of a breach of the GDPR, a company can be fined up to the greater of 20 million euros or 4% of its annual global revenue, depending on the seriousness of the breach.

Companies that use and rely on software providers, whether proprietary or Free Open Source Software (FOSS), will be held accountable for their own actions. FOSS licences, such as the GNU GPL or BSD licences, disclaim all warranties and liabilities, placing responsibility on the user of the software.

It is important for the architects of such software to assess and provide information about protecting sensitive data when default settings are applied – including what should be uploaded and what should not.

Search engines such as Google and Bing have become extremely good at finding new documents on the Internet. Perhaps even too good? They find documents that we might hope they wouldn’t.

We touched upon Dorking back in 2016, when we gave reasons why companies should use these techniques to penetration test their own content management systems and online infrastructure.

(We often work on Bug Bounty Programs and consult to help companies improve their security on the Internet, in an ethical way.)

Who is affected by leaking data?

From the extensive research that we have carried out, we have found data being leaked on search engines, from small, independent shops, right the way up to multi-billion pound company groups.

From employment records to passport details, user, client and supplier data (with invoices exposed by each), almost everything can be found through search engines.

Some companies may use content management systems that attempt to automatically notify search engines of content that has been uploaded. Although this may be very beneficial for blog posts and landing pages for Search Engine Optimisation, it represents a legitimate security concern for sensitive data.

Content Platforms

Builtwith.com shows that 51% of the top one million websites are using just one of many FOSS content management systems. Companies that use content management systems need to assess their current security policies, along with verifying any plugins that they might use.

A company that leaks information about its suppliers or customers may be in breach of its Non-Disclosure Agreement (NDA). Documents such as invoices may contain sensitive details about the relationship and its financial transactions.

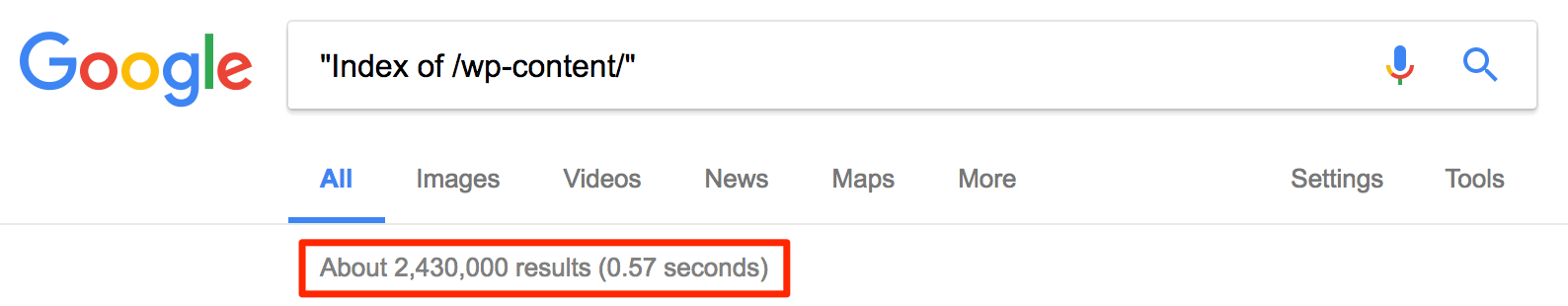

Consider the following search in Google. It shows the entire directory structure along with all associated uploads:

"Index of /wp-content/"

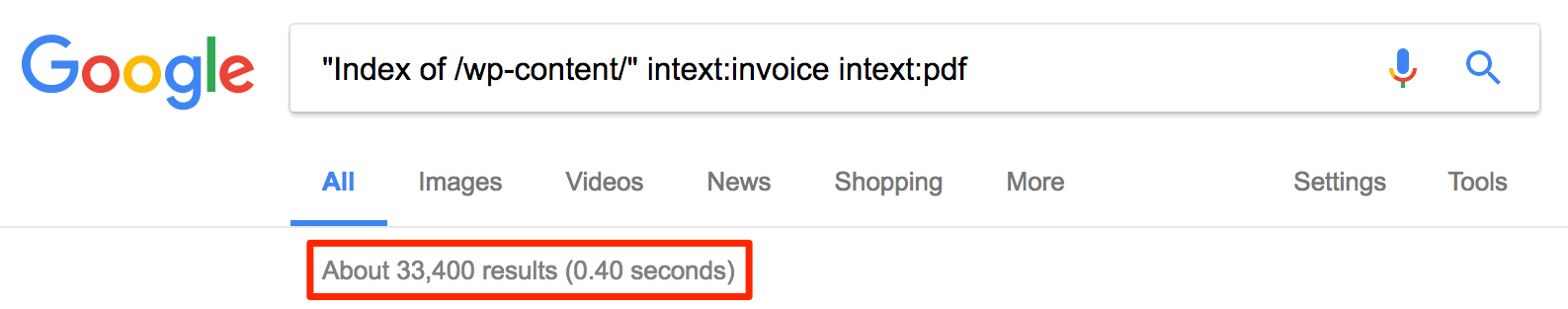

Scoping that query to invoice data is quite simple:

"Index of /wp-content/" intext:invoice intext:pdf

@JonoAlderson shared with us the potential for a media vulnerability based on his own domain, jonoalderson.com. The URL path /wp-json/wp/v2/media lists the site's uploaded media, which a bad actor could use to discover any sensitive assets that may have been uploaded. Jono does not use the website as a content management system for storing sensitive data, but small business owners may do so without realising.

Leaked invoices

During our investigation, we found instances of leaked invoices, including one for a purchase of our own!

This was caused by a vendor not only leaking customer data onto Google, but also allowing that data to be served over an HTTP connection.

An example of the original URL was: https://my.example.com/1234-5678-9101-1121

By stripping off the Universally Unique Identifier (UUID) at the end of the URL and removing the HTTPS protocol, I was able to ask Google about what it knew in regards to the (sub)domain:

site:my.example.com

Google stated that there were three URLs indexed, all of which appeared to follow the same long UUID URL pattern.

This may not seem like a lot, and the case is unfortunate for those three customers. Helpfully, the issue was caught in the early stages and the company has been notified so that it can fix the problem.

There are other cases of this happening online. In 2015, I discovered an airline company that was leaking sensitive data about its customers.

The data in question involved individual customer receipts, with each one containing a customer’s name, address, date range of their holiday, and the last four digits of the customer’s card details.

I notified the airline company, firstly going through its Chief Technology Officer and then its Head of Development. But after discussing the issue in-depth, I was told that the site was actually carrying out its intended functionality, and my warnings went unheeded. A recent Google search showed that the receipts are no longer available, so we can assume that they decided it was important enough to fix in the end.

Such information offers a paradise of opportunity for thieves, as even the most basic holiday information can provide an open window for criminals to access empty properties. This kind of information can also be used for social engineering scams.

Companies that provide accounting services and invoices should pay particular attention here, as they could be leaking important client data.

Examples of leaked document scans

It is common practice for companies to scan important information and documents for legal reasons such as Know Your Customer (KYC), anti-money laundering, and employment. Uploading those photographs and scanned documents to web applications is equally dangerous.

Google has image recognition capabilities which can be used to find similar files. A bad actor could upload a visually similar image and create Dorking queries around the image recognition. This technique applies to common forms that have sensitive documentation, such as P45 forms, visas, driving licences and passports.

With this in mind, customers should be holding their employers, suppliers, and vendors accountable for their information privacy.

How to check if your company is leaking data

At this point it is important to realise that there are ethical considerations in showing some of these techniques, even for educational purposes. Use them at your own risk.

Robots.txt files are a good way to find out what webmasters do not want you to discover. Obscurity is not security.

Take your invoice template and check for multiple strings on the page using Google’s site operators. Here are a few examples that I could check:

site:merj.com intext:"invoice" intext:"customer" intext:"total"

site:merj.com filetype:"pdf"

site:merj.com filetype:"xls"

site:merj.com filetype:"xlsx"

site:merj.com "sort code" "invoice date" "invoice" filetype:pdf -inurl:sample

site:merj.com "invoice" inurl:inv filetype:pdf

site:merj.com "payment advice" inurl:inv filetype:pdf

The last two are specific to a particular invoice generation software. I know that this system uses inv-.pdf as the pattern, and that another invoice generation company uses INV followed by a zero-padded six-digit number, INV0[00000].pdf. By using the following pattern, we can find invoices from companies that have issued fewer than 100,000 of them:

site:merj.com "invoice" inurl:inv0 filetype:pdf

site:merj.com "payment advice" inurl:inv0 filetype:pdf

For those running on popular blogging platforms, the wp-content directory often contains all uploads, including potentially sensitive information. Here is one query to try:

site:yoursite.com "invoice" inurl:wp-content inurl:inv filetype:pdf

Google provides a wealth of different search operators. Start by checking simple operators, then work up to more precise expressions.
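To make these checks repeatable, the queries above can be generated for any domain. The following is a minimal sketch: audit_queries is a hypothetical helper name, and the operator combinations are simply copied from the examples in this article. It only builds the query strings to paste into a search engine; it does not contact Google.

```ruby
# Build a checklist of search queries for one domain.
# `audit_queries` is a hypothetical helper; the operator combinations
# mirror the examples given earlier in this article.
def audit_queries(domain)
  site = "site:#{domain}"
  queries = [
    %(#{site} intext:"invoice" intext:"customer" intext:"total"),
    %(#{site} "sort code" "invoice date" "invoice" filetype:pdf -inurl:sample),
    %(#{site} "invoice" inurl:inv filetype:pdf),
    %(#{site} "invoice" inurl:wp-content inurl:inv filetype:pdf)
  ]
  # One broad query per file type that commonly holds financial data.
  %w[pdf xls xlsx].each { |ext| queries << "#{site} filetype:#{ext}" }
  queries
end

# Print the checklist for a placeholder domain.
puts audit_queries("example.com")
```

Adjust the strings to match the wording of your own invoice template before relying on the results.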

Once the string pattern has been determined, it is possible for a threat actor to use a brute-force attack. It is very important to note that brute-force attacks are illegal, so do not attempt one unless you are penetration testing your own website.

A brute force attack could be as simple as incrementing a counter that attacks URLs, for example:

https://example.com/receipts/1

https://example.com/receipts/2

https://example.com/receipts/3

More sophisticated attacks can be performed with more knowledge about the system and the patterns that it follows. Using simple patterns like incrementing numbers makes the system easier to attack.
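One way to avoid guessable patterns is to identify each receipt by a long random token instead of a counter. This is a minimal sketch using Ruby's standard SecureRandom library; receipt_token and the /receipts/ path are illustrative assumptions, not part of any system described here. A random token only makes enumeration impractical. If the URL itself ends up in a search index, the document is still exposed, so this complements the access controls discussed later rather than replacing them.

```ruby
require "securerandom"

# Hypothetical helper: returns an unguessable path segment for a receipt URL.
# 32 random bytes encode to 43 URL-safe characters.
def receipt_token
  SecureRandom.urlsafe_base64(32)
end

# Example URL on a placeholder host.
puts "https://example.com/receipts/#{receipt_token}"
```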

How to prevent a brute-force attack

There are many sophisticated services that can help prevent attacks, such as Akamai, Incapsula and Cloudflare. In-house solutions could also be used. We suggest rate limiting areas that hold sensitive information to thresholds that match real usage. Would a customer really try to access more than three invoices every five seconds?

If the user is attempting to access invoices with a high failure rate, consider banning the IP and returning a 429 header status.
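The throttling rule above can be sketched as a small sliding-window limiter. This is an illustration under stated assumptions, not a production implementation: InvoiceRateLimiter and status_for are hypothetical names, the counters live in memory in a single process, and the default of three requests per five seconds is taken from the question posed earlier. A real deployment would normally keep the counters in a shared store or use one of the services mentioned above.

```ruby
# Sliding-window limiter: at most `limit` requests per `window` seconds per client.
# Hypothetical in-memory sketch; state is lost on restart and not shared between servers.
class InvoiceRateLimiter
  def initialize(limit: 3, window: 5.0)
    @limit = limit
    @window = window
    @hits = Hash.new { |hash, key| hash[key] = [] }
  end

  # Returns the HTTP status to send: 200 if allowed, 429 if throttled.
  # `now` can be injected for testing; by default a monotonic clock is used.
  def status_for(client_ip, now: Process.clock_gettime(Process::CLOCK_MONOTONIC))
    recent = @hits[client_ip]
    # Forget requests that have fallen out of the window.
    recent.reject! { |time| now - time >= @window }
    return 429 if recent.size >= @limit

    recent << now
    200
  end
end
```

Throttled requests are not recorded in this sketch, so a client is allowed again as soon as its earlier requests age out of the window.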

At this point it is important to reiterate that companies need to take responsibility for their own security. Relying on open source solutions is not enough.

Solution #1 Require the user to log in to download/view data

If the customer is performing a ‘guest checkout’, a company can consider giving them a temporary user session to download invoices. Another possibility is to force them to activate an account after a short period of time in order to continue accessing invoices.

Some frameworks allow files to be passed through. For example, in Ruby on Rails, a send_data method is available and can be placed inside a controller, which manages the user authentication, but also sends the file if the authentication is passed.

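def download

# Look the invoice up through current_user so customers can only reach their own records.

@invoice = current_user.invoices.find_by(id: params[:id])

if @invoice.present?

# Send the file through the controller rather than exposing a public URL.

send_data(@invoice.attachment, filename: "#{@invoice.title_safe}.pdf")

else

head :not_found

end

end

Solution #2 Use a timelock URL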

Amazon S3 uses time-based authenticated URLs to limit how long a single URL can be used before it must be regenerated. This requires a secure hash of a time in the future, computed with a secret key that only the server knows about.

It is really easy to replicate the functionality for custom solutions, and here is a working Ruby (2.4+) example, using the Sinatra library as a server. It is running on a Heroku instance.

The workflow starts with the user visiting the generate URL. A secret URL is created and the user is automatically redirected to that secret page, which returns a 200 header status. After 10 seconds, the content is hidden and the URL returns a 403 header status. Click refresh a few times to see the time counter in action.

You can clone the sample application from the MERJ-Timelock project on GitHub to get this running locally.

git clone https://github.com/rsiddle/merj-timelock

cd merj-timelock

bundle

foreman start

Open your web browser and visit http://localhost:5000/generate

It is important to note that the key should not be checked into a repository. Each user should also have a unique key that is not exposed. This ensures that users cannot simply time attack one another. A longer timeframe should be used for live websites, for example one hour.
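The signing and checking steps described above can be sketched with Ruby's standard OpenSSL library. This is not the code from the sample application, and it is not how Amazon S3 builds its URLs. It is a minimal illustration in which the function names, the expires and signature parameter names, and the TIMELOCK_KEY environment variable are all assumptions.

```ruby
require "openssl"

# Placeholder default only; load the real key from the environment, never from the repository.
SECRET_KEY = ENV.fetch("TIMELOCK_KEY", "change-me")

# HMAC over the path and expiry time, so neither can be altered without the key.
def sign(path, expires_at, key = SECRET_KEY)
  OpenSSL::HMAC.hexdigest("SHA256", key, "#{path}:#{expires_at}")
end

# Builds a URL that stays valid for `ttl` seconds (one hour by default).
def timelock_url(path, ttl: 3600, now: Time.now.to_i, key: SECRET_KEY)
  expires_at = now + ttl
  "#{path}?expires=#{expires_at}&signature=#{sign(path, expires_at, key)}"
end

# Compares two strings without stopping at the first differing byte.
def secure_equal?(left, right)
  return false unless left.bytesize == right.bytesize

  left.bytes.zip(right.bytes).reduce(0) { |acc, (x, y)| acc | (x ^ y) }.zero?
end

# Returns 200 while the signature is valid and unexpired, otherwise 403.
def status_for(path, expires, signature, now: Time.now.to_i, key: SECRET_KEY)
  valid = secure_equal?(signature, sign(path, expires, key))
  (valid && now <= expires.to_i) ? 200 : 403
end
```

Passing a different key for each user, as recommended above, means a signature issued to one customer is useless to another.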

Solution #3 Using Schema.org/Invoice & Google Search Console

I propose a third option which involves developers as well as Google helping to identify issues through Google Search Console.

The Schema.org/Invoice type is available and describes the details of a typical invoice. However, it does not seem to be used or even discussed much on the web, with only 396,000 results (Google UK), whereas Schema.org/Review has 89.5 million results (Google UK). Developers are often asked to implement schema, as the ever-growing markup provides ways of parsing data. An example of the Invoice schema (Microdata) is:

<div itemscope itemtype="http://schema.org/Invoice">

<h1 itemprop="description">New furnace and installation</h1>

<div itemprop="broker" itemscope itemtype="https://schema.org/LocalBusiness">

<b itemprop="name">ACME Home Heating</b>

</div>

<div itemprop="customer" itemscope itemtype="https://schema.org/Person">

<b itemprop="name">Jane Doe</b>

</div>

<time itemprop="paymentDueDate">2015-01-30</time>

<div itemprop="minimumPaymentDue" itemscope itemtype="https://schema.org/PriceSpecification">

<span itemprop="price">0.00</span>

<span itemprop="priceCurrency">USD</span>

</div>

<div itemprop="totalPaymentDue" itemscope itemtype="https://schema.org/PriceSpecification">

<span itemprop="price">0.00</span>

<span itemprop="priceCurrency">USD</span>

</div>

<link itemprop="paymentStatus" href="https://schema.org/PaymentComplete" />

<div itemprop="referencesOrder" itemscope itemtype="https://schema.org/Order">

<span itemprop="description">furnace</span>

<time itemprop="orderDate">2014-12-01</time>

<span itemprop="orderNumber">123ABC</span>

<div itemprop="orderedItem" itemscope itemtype="http://schema.org/Product">

<span itemprop="name">ACME Furnace 3000</span>

<meta itemprop="productId" content="ABC123" />

</div>

</div>

<div itemprop="referencesOrder" itemscope itemtype="https://schema.org/Order">

<span itemprop="description">furnace installation</span>

<time itemprop="orderDate">2014-12-02</time>

<div itemprop="orderedItem" itemscope itemtype="https://schema.org/Service">

<span itemprop="description">furnace installation</span>

</div>

</div>

</div>

By implementing even just the most critical parts of the Invoice markup, Google could inform webmasters if it detects the schema while crawling and indexing information. Such pages could be marked in Search Console as sensitive information and prioritised for removal from the index. As we know, not all pages are removed straight away and often hang around for days, weeks, or even months.

Ideally the webmaster should implement solution one or two off the back of this.

Summary

We are starting conversations with Open Source projects in the ecommerce space to increase awareness of securing data online and will discuss using the techniques described above. All companies should assess their systems to ensure they are not leaking sensitive data.

Searching for scanned documents, such as passports, would require a different approach, however. I call upon search engines to help with such solutions.

Search engines indexing sensitive information is not intended functionality.

Create systems that actively protect sensitive information. Companies should expect large fines for data breaches under the GDPR legislation that comes into effect on 25 May 2018.

Let me know your thoughts on Twitter @ryansiddle.

Need help securing your company? Send me an email to [email protected].